Human-robot interaction for Rehabilitation and Assistive Robotics

We are interested in bringing ideas, concepts, mechanisms, control systems, interaction strategies, and intent-detection methods from robotics over to rehabilitation.

We use prostheses, exoskeletons and exo-suits as well as fully fledged robotic arms and virtual reality.

We also focus on interactive machine learning, sensors and the signals they provide, the physical attachment of sensors and actuators to the human body, and functional assessment.

Lastly, somatosensory feedback is of great interest to us.

Responsible persons: Claudio, Marek, Fabio.

(Image: coordinated bimanual teleoperation of the TORO humanoid platform by a person with bilateral upper-limb absence. [Connan et al., Biomedical Physics & Engineering Express 2020])

Intent detection and somatosensory feedback

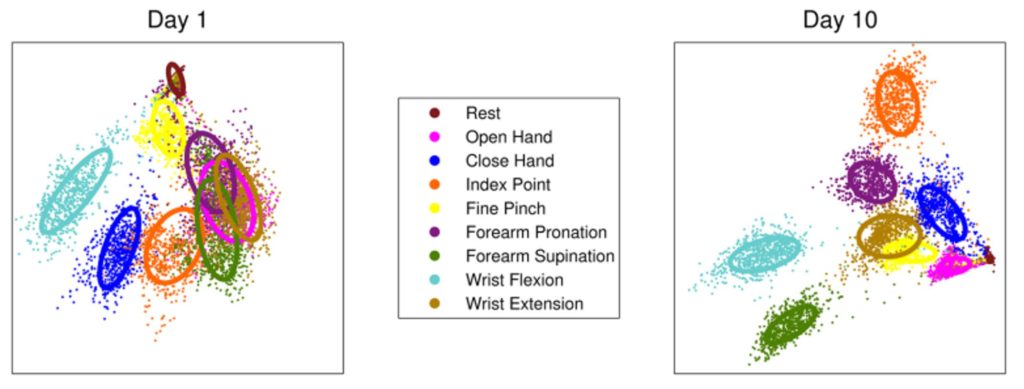

The signals people generate while learning to use a tool change dramatically over time, and this change goes hand in hand with improvements in control and acceptance.

Can we foster and exploit such a change in order to reach optimal control and full trust between man and machine?

Intent detection (the feed-forward path) and somatosensory feedback (the feedback path) are the two main components of our Human-Machine Interfaces and aim at this goal!

Responsible persons: Claudio, Marek, Fabio.

(Image: electromyographic signals produced by a user before and after learning to perform a prosthetic task. [Powell and Thakor, J Prosthet Orthot. 2013])

Movement Analysis

Tracking human movement and body pose in real time is necessary in order to estimate the loads on individual joints and, by extension, the load on the muscles.

This is useful in order to counteract the limb position effect in prosthesis control, to estimate the intended impedance in telemanipulation scenarios, and to provide force feedback through functional electrical stimulation.
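As a rough illustration of how a tracked pose can be turned into a joint load, the sketch below computes the static gravitational torque about the elbow from the forearm's elevation angle. This is not the lab's method: the function name, the single-segment model, and the example masses and distances are all assumptions chosen for illustration, and dynamic effects (inertia, muscle co-contraction) are ignored.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def static_elbow_torque(forearm_angle_rad, forearm_mass_kg, com_distance_m,
                        load_mass_kg=0.0, load_distance_m=0.0):
    """Static gravitational torque about the elbow, in N*m.

    forearm_angle_rad: elevation of the forearm relative to horizontal
                       (0 = horizontal, pi/2 = pointing straight up).
    com_distance_m:    distance from the elbow to the forearm's center of mass.
    load_*:            optional hand-held load (hypothetical parameters).
    """
    # The horizontal lever arm shrinks with the cosine of the elevation angle.
    lever_scale = math.cos(forearm_angle_rad)
    moment = forearm_mass_kg * com_distance_m + load_mass_kg * load_distance_m
    return G * lever_scale * moment


if __name__ == "__main__":
    # Illustrative numbers only: 1.5 kg forearm, center of mass 0.15 m from the elbow.
    for deg in (0, 45, 90):
        torque = static_elbow_torque(math.radians(deg), 1.5, 0.15)
        print(f"{deg:3d} deg -> {torque:.3f} N*m")
```

In this simplified model the torque is largest when the forearm is horizontal and vanishes when it points straight up, which is one intuition behind why muscle signals for the same intended grasp differ across limb positions.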

Responsible persons are Marek Sierotowicz and Marc-Anton Scheidl.

Virtual and Augmented Reality

The AIROB Lab applies VR to quickly and effectively evaluate certain aspects of the user-robot interaction, as well as to provide virtual therapy for phantom limb pain.

Most of our experience builds upon the VITA setup from the German Aerospace Center. The AIROB Lab has produced a truly mobile version of the same concept, which can be used on any Android device together with a few wearables.

To know more, contact Fabio Egle or Marek Sierotowicz.

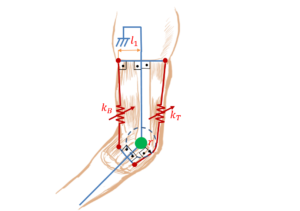

Functional Electrical Stimulation

Functional Electrical Stimulation (FES) produces movements or forces by injecting stimulation currents that induce motor neurons to fire.

The MyoCeption Functional Electrical Stimulation Force Feedback device is able to provide force feedback on an arbitrary number of joints through the injection of electrical currents. It can even control the user’s movements outright, which can be useful for patients with spinal cord injury.

To know more, contact Marek Sierotowicz.

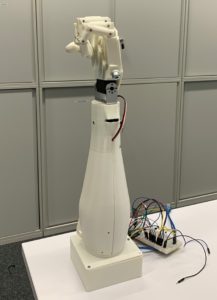

Upper-limb prosthetics

Upper-limb prostheses have undergone continuous technological development over the past decades. Today’s prostheses already partially exploit biosignal analysis and pattern recognition, for example by using muscle activity measured through surface electromyography (sEMG) to control the prosthesis.

Nevertheless, the rejection rate of electromyography-based interfaces remains very high. In the case of prostheses, reasons for rejection often include factors related to human-machine interaction (HMI), such as comfort, function, and control of the prosthesis. Consequently, many amputees use cosmetic or body-powered prostheses instead of the technologically advanced alternatives.

The AIROB Lab works continuously towards solving these problems, especially in the context of HMI.

The focus here is, in particular, on improving intent detection for prosthesis control through sensor modalities, biosignal analysis, and machine learning.
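To make the idea of sEMG-based intent detection concrete, here is a minimal sketch of one common pattern-recognition recipe: compute a root-mean-square (RMS) feature per channel over a short window, then assign the window to the nearest class centroid. It is an illustrative assumption, not the lab's actual pipeline; the function names, the two-channel layout, and the synthetic samples are all hypothetical.

```python
import math


def rms_features(window):
    """RMS amplitude per channel.

    window: list of samples, each sample a list with one value per channel.
    """
    n_channels = len(window[0])
    return [
        math.sqrt(sum(sample[c] ** 2 for sample in window) / len(window))
        for c in range(n_channels)
    ]


def fit_centroids(features, labels):
    """Average the feature vectors of each class (nearest-centroid training)."""
    sums, counts = {}, {}
    for feat, label in zip(features, labels):
        acc = sums.setdefault(label, [0.0] * len(feat))
        for i, value in enumerate(feat):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, feat):
    """Return the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], feat))


if __name__ == "__main__":
    # Synthetic two-channel windows: "open" mostly activates channel 0,
    # "close" mostly activates channel 1 (made-up numbers).
    open_window = [[0.8, 0.1], [-0.9, -0.1], [0.7, 0.05]]
    close_window = [[0.1, 0.9], [-0.05, -0.8], [0.1, 0.7]]
    centroids = fit_centroids(
        [rms_features(open_window), rms_features(close_window)],
        ["open", "close"],
    )
    new_window = [[0.6, 0.1], [-0.7, 0.0]]
    print(predict(centroids, rms_features(new_window)))
```

A nearest-centroid rule is used here only because it fits in a few lines; real systems typically use richer features, more channels, and classifiers or regressors that can be updated interactively as the user's signals change.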

Responsible persons: Claudio, Fabio.

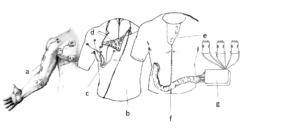

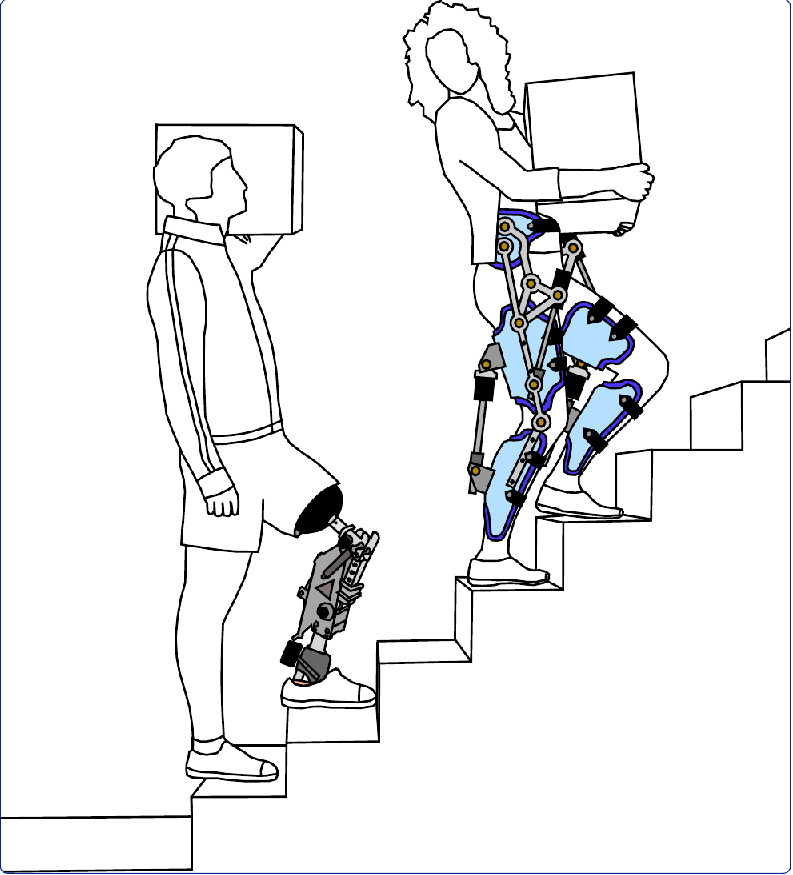

Lower-limb rehabilitation and prosthetics

Rehabilitation and assistive devices for the lower limbs have been around for centuries. Starting from passive weight-bearing structures, they have become more and more sophisticated as technology has improved.

The AIROB Lab aims to make the use of assistive and rehabilitation devices (e.g., prostheses) as robust and intuitive as possible.

Recent developments in sensor technologies, machine learning and signal analysis enable us to bridge the gap between the human being and the robotic devices.

Our research aims to improve the lives of amputees and others who rely on rehabilitation devices by correctly identifying their intended movements.